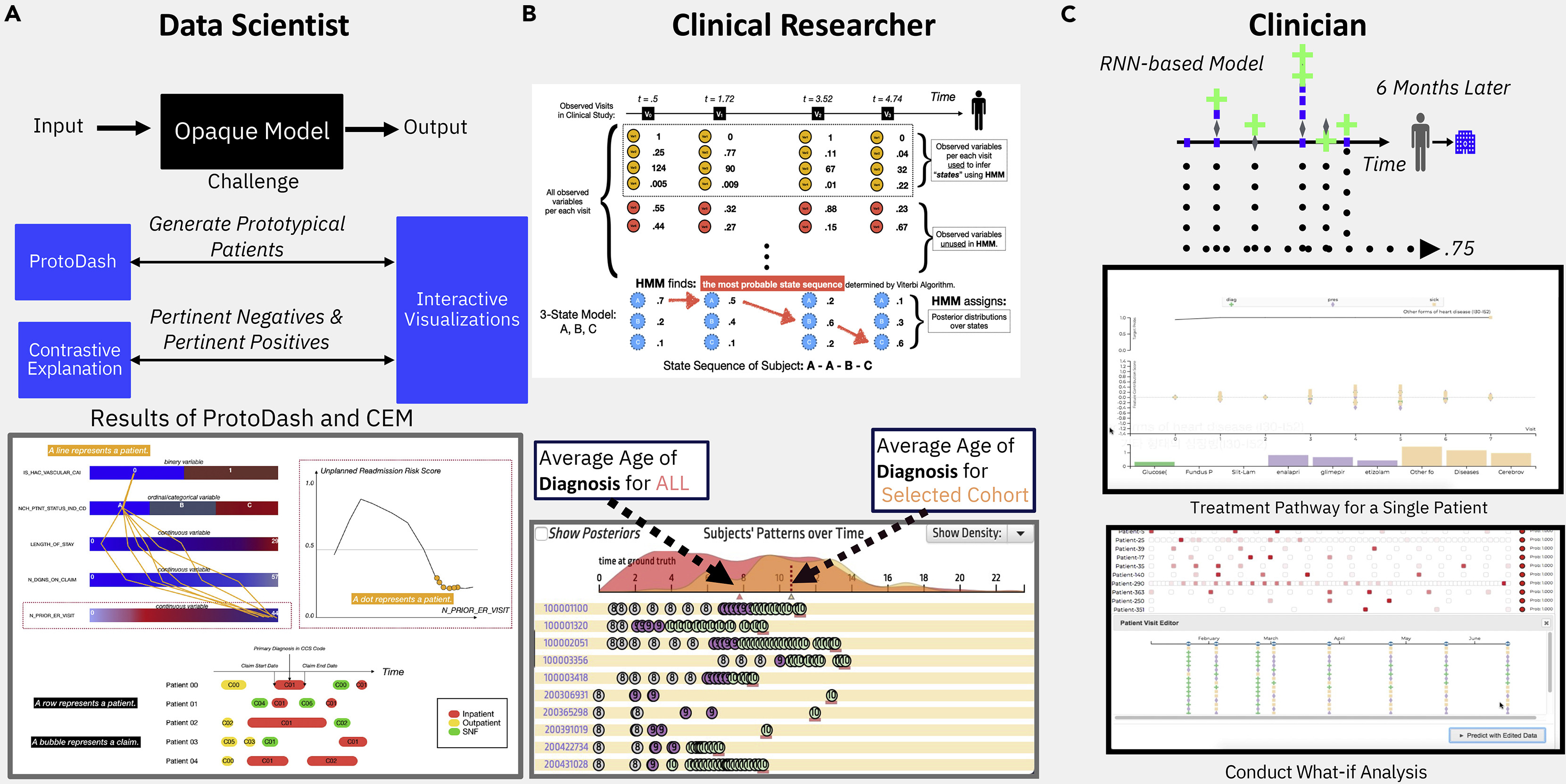

This paper provides an overview of explainability in AI (XAI), clinical research examples that leverage XAI techniques, and visualizations that enhance the explanations. This figure shows three personas of clinical users and visualization examples that support each persona in their clinical research activities.

Abstract

Rapid advances in artificial intelligence (AI) and the availability of biological, medical, and healthcare data have enabled the development of a wide variety of models. Significant success has been achieved in a wide range of fields, such as genomics, protein folding, disease diagnosis, imaging, and clinical tasks. Although widely used, deep AI models are inherently opaque, which has drawn criticism from the research community and limited their adoption in clinical practice. Concurrently, a significant amount of research, reviewed here, has focused on making such methods more interpretable, but inherent critiques of explainability in AI (XAI), its requirements, and concerns about fairness and robustness have hampered real-world adoption. Here we discuss how user-driven XAI can be made more useful for different healthcare stakeholders by defining three key personas (data scientists, clinical researchers, and clinicians), and we present an overview of how different XAI approaches can address their needs. For illustration, we also walk through several research and clinical examples that take advantage of open-source XAI tools, including those that enhance the explanation of results through visualization. This perspective thus aims to provide a guidance tool for developing explainability solutions for healthcare by empowering both subject matter experts, through a survey of available tools, and explainability developers, through examples of how such methods can influence the adoption of solutions in practice.